May

2026

One paper accepted to SIGGRAPH 2026.

Dec

2025

Two papers accepted to CVPR 2026. One paper accepted to 3DV 2026.

Nov

2025

Our general whole-body control framework SONIC was released on arXiv. Code and models coming soon!

Jun

2025

Three papers accepted to ICCV 2025. See you in Hawaii!

Feb

2025

Two papers accepted to CVPR 2025.

Feb

2024

Two papers accepted to CVPR 2024.

Jul

2023

Two papers accepted to ICCV 2023. See you in Paris!

Mar

2023

One paper on learning digital tennis players from videos accepted to SIGGRAPH 2023 (Best Paper Honorable Mention).

Feb

2023

One paper on simulating pedestrian motions accepted to CVPR 2023.

Sep

2022

One paper on embodied human pose estimation accepted to NeurIPS 2022.

May

2022

Joined NVIDIA Research as a Research Scientist.

Apr

2022

Defended my Ph.D. thesis, "Unified Simulation, Perception, and Generation of Human Behavior".

Mar

2022

One paper on global human mesh recovery accepted to CVPR 2022 with an Oral Presentation.

Jan

2022

One paper on efficient automatic agent design accepted to ICLR 2022 with an Oral Presentation.

Jan

2022

Invited Talk at MPI Perceiving Systems.

Sep

2021

One paper on kinematics-guided control accepted to NeurIPS 2021.

Jul

2021

One paper on multi-agent forecasting accepted to ICCV 2021.

May

2021

Starting my internship at NVIDIA AI.

Apr

2021

Invited Talk at ETH Zurich, Computer Vision and Learning Group.

Apr

2021

Invited Talk at "Machine Learning and Optimal Control" class, University of Alabama.

Mar

2021

Received an Outstanding Reviewer Award from ICLR 2021.

Feb

2021

One paper on physically plausible human pose estimation accepted to CVPR 2021 with an Oral Presentation.

Feb

2021

One paper accepted to ICRA 2021.

Feb

2021

Invited Talk at the 16th CSL Student Conference.

Feb

2021

Invited Talk at UIUC Robotics Seminar.

Dec

2020

Invited Talks at Qualcomm and Wayve.

Dec

2020

Honored to receive the 2021 NVIDIA Graduate Fellowship.

Sep

2020

One paper on Residual Force Control accepted to NeurIPS 2020.

Aug

2020

Honored to receive the 2020 Qualcomm Innovation Fellowship.

Jul

2020

Two papers accepted to ECCV 2020.

May

2020

Starting my internship at Facebook Reality Labs Pittsburgh.

Feb

2020

Two papers accepted to CVPR 2020, one with an Oral Presentation.

Dec

2019

Paper "Diverse Trajectory Forecasting with Determinantal Point Processes" accepted to ICLR 2020.

Jul

2019

Paper "Ego-Pose Estimation and Forecasting as Real-Time PD Control" accepted to ICCV 2019.

Jul

2018

Paper "3D Ego-Pose Estimation via Imitation Learning" accepted to ECCV 2018.


GRAIL: Generating Humanoid Loco-Manipulation from 3D Assets and Video Priors

Tianyi Xie *,

Haotian Zhang *,

Jinhyung Park *,

Zi Wang *,

Bowen Wen ,

Jiefeng Li ,

Xueting Li ,

Qingwei Ben ,

Haoyang Weng ,

Yufei Ye ,

David Minor ,

Tingwu Wang ,

Chenfanfu Jiang ,

Sanja Fidler ,

Jan Kautz ,

Linxi "Jim" Fan ,

Yuke Zhu ,

Zhengyi Luo †,

Umar Iqbal †,

Ye Yuan † (*Co-First Authors, †Project Leads)

arXiv , 2026


MotionBricks: Scalable Real-Time Motions with Modular Latent Generative Model and Smart Primitives

Tingwu Wang ,

Olivier Dionne,

Michael De Ruyter ,

David Minor ,

Davis Rempe ,

Kaifeng Zhao,

Mathis Petrovich ,

Ye Yuan ,

Chenran Li ,

Zhengyi Luo ,

Brian Robison,

Xavier Blackwell,

Bernardo Antoniazzi,

Xue Bin Peng ,

Yuke Zhu ,

Simon Yuen

SIGGRAPH , 2026


Kimodo: Scaling Controllable Human Motion Generation

Davis Rempe *,

Mathis Petrovich *,

Ye Yuan ,

Haotian Zhang ,

Xue Bin Peng ,

Yifeng Jiang ,

Tingwu Wang ,

Umar Iqbal ,

David Minor ,

Michael de Ruyter ,

Jiefeng Li ,

Chen Tessler ,

Edy Lim,

Eugene Jeong,

Sam Wu,

Ehsan Hassani,

Michael Huang,

Jin-Bey Yu,

Chaeyeon Chung,

Lina Song,

Olivier Dionne,

Jan Kautz ,

Simon Yuen ,

Sanja Fidler (*Equal Contribution)

arXiv , 2026


CHIP: Adaptive Compliance for Humanoid Control through Hindsight Perturbation

Sirui Chen *,

Zi-Ang Cao *,

Zhengyi Luo ,

Fernando Castañeda ,

Chenran Li ,

Tingwu Wang ,

Ye Yuan ,

Linxi "Jim" Fan ,

C. Karen Liu †,

Yuke Zhu † (*Equal Contribution, †Project Leads)

arXiv , 2025


SONIC: Supersizing Motion Tracking for Natural Humanoid Whole-Body Control

Zhengyi Luo †,

Ye Yuan †,

Tingwu Wang †,

Chenran Li †,

Sirui Chen *,

Fernando Castañeda *,

Zi-Ang Cao *,

Jiefeng Li *,

David Minor *,

Qingwei Ben *,

Xingye Da *,

Runyu Ding ,

Cyrus Hogg ,

Lina Song ,

Edy Lim ,

Eugene Jeong ,

Tairan He ,

Haoru Xue ,

Wenli Xiao ,

Zi Wang ,

Simon Yuen ,

Jan Kautz ,

Yan Chang ,

Umar Iqbal ,

Linxi "Jim" Fan ‡,

Yuke Zhu ‡ (†Co-First Authors, *Core Contributors, ‡Project Leads)

arXiv , 2025


VIRAL: Visual Sim-to-Real at Scale for Humanoid Loco-Manipulation

Tairan He *,

Zi Wang *,

Haoru Xue *,

Qingwei Ben *,

Zhengyi Luo ,

Wenli Xiao ,

Ye Yuan ,

Xingye Da ,

Fernando Castañeda ,

Shankar Sastry ,

Changliu Liu ,

Guanya Shi ,

Linxi "Jim" Fan †,

Yuke Zhu † (*Equal Contribution, †Project Leads)

CVPR , 2026


CARI4D: Category Agnostic 4D Reconstruction of Human-Object Interaction

Xianghui Xie ,

Bowen Wen ,

Yan Chang ,

Hesam Rabeti ,

Jiefeng Li ,

Ye Yuan ,

Gerard Pons-Moll ,

Stan Birchfield

CVPR , 2026


Dream, Lift, Animate: From Single Images to Animatable Gaussian Avatars

Marcel C. Bühler ,

Ye Yuan ,

Xueting Li ,

Yangyi Huang ,

Koki Nagano ,

Umar Iqbal

3DV , 2026

GENMO: A GENeralist Model for Human MOtion

Jiefeng Li ,

Jinkun Cao ,

Haotian Zhang ,

Davis Rempe ,

Jan Kautz ,

Umar Iqbal ,

Ye Yuan

ICCV , 2025 (Highlight)

Emergent Active Perception and Dexterity of Simulated Humanoids from Visual Reinforcement Learning

Zhengyi Luo ,

Chen Tessler ,

Toru Lin ,

Ye Yuan ,

Tairan He ,

Wenli Xiao ,

Yunrong Guo ,

Gal Chechik ,

Kris Kitani ,

Jim Fan ,

Yuke Zhu

arXiv , 2025

AdaHuman: Animatable Detailed 3D Human Generation with Compositional Multiview Diffusion

Yangyi Huang ,

Ye Yuan ,

Xueting Li ,

Jan Kautz ,

Umar Iqbal

ICCV , 2025

GeoMan: Temporally Consistent Human Geometry Estimation using Image-to-Video Diffusion

Gwanghyun Kim ,

Xueting Li ,

Ye Yuan ,

Koki Nagano ,

Tianye Li ,

Jan Kautz ,

Se Young Chun ,

Umar Iqbal

ICCV , 2025

SimAvatar: Simulation-Ready Avatars with Layered Hair and Clothing

Xueting Li ,

Ye Yuan ,

Shalini De Mello ,

Gilles Daviet ,

Jonathan Leaf ,

Miles Macklin ,

Jan Kautz ,

Umar Iqbal

CVPR , 2025

BLADE: Single-view Body Mesh Learning through Accurate Depth Estimation

Shengze Wang ,

Jiefeng Li ,

Tianye Li ,

Ye Yuan ,

Henry Fuchs ,

Koki Nagano ,

Shalini De Mello ,

Michael Stengel

CVPR , 2025

Harmon: Whole-Body Motion Generation of Humanoid Robots from Language Descriptions

Zhenyu Jiang ,

Yuqi Xie ,

Jinhan Li ,

Ye Yuan ,

Yifeng Zhu ,

Yuke Zhu

CoRL , 2024

SMPLOlympics: Sports Environments for Physically Simulated Humanoids

Zhengyi Luo ,

Jiashun Wang ,

Kangni Liu ,

Haotian Zhang ,

Chen Tessler ,

Jingbo Wang ,

Ye Yuan ,

Jinkun Cao ,

Zihui Lin ,

Fengyi Wang ,

Jessica Hodgins ,

Kris Kitani

arXiv , 2024

COIN: Control-Inpainting Diffusion Prior for Human and Camera Motion Estimation

Jiefeng Li ,

Ye Yuan ,

Davis Rempe ,

Haotian Zhang ,

Pavlo Molchanov ,

Cewu Lu ,

Jan Kautz ,

Umar Iqbal

ECCV , 2024

AGG: Amortized Generative 3D Gaussians for Single Image to 3D

Dejia Xu ,

Ye Yuan ,

Morteza Mardani ,

Sifei Liu ,

Jiaming Song ,

Zhangyang Wang ,

Arash Vahdat

TMLR , 2024


GAvatar: Animatable 3D Gaussian Avatars with Implicit Mesh Learning

Ye Yuan ,

Xueting Li ,

Yangyi Huang ,

Shalini De Mello ,

Koki Nagano ,

Jan Kautz ,

Umar Iqbal (*Equal Contribution)

CVPR , 2024 (Highlight)

PACER+: On-Demand Pedestrian Animation Controller in Driving Scenarios

Jingbo Wang ,

Zhengyi Luo ,

Ye Yuan ,

Yixuan Li ,

Bo Dai

CVPR , 2024


PACE: Human and Camera Motion Estimation from in-the-wild Videos

Muhammed Kocabas ,

Ye Yuan ,

Pavlo Molchanov ,

Yunrong Guo ,

Michael Black ,

Otmar Hilliges ,

Jan Kautz ,

Umar Iqbal

3DV , 2024 (Spotlight Presentation)


PhysDiff: Physics-Guided Human Motion Diffusion Model

Ye Yuan ,

Jiaming Song ,

Umar Iqbal ,

Arash Vahdat ,

Jan Kautz

ICCV , 2023 (Oral Presentation)


Learning Human Dynamics in Autonomous Driving Scenarios

Jingbo Wang ,

Ye Yuan ,

Zhengyi Luo ,

Kevin Xie ,

Dahua Lin ,

Umar Iqbal ,

Sanja Fidler ,

Sameh Khamis

ICCV , 2023


Learning Physically Simulated Tennis Players from Broadcast Videos

Haotian Zhang ,

Ye Yuan ,

Viktor Makoviychuk ,

Yunrong Guo ,

Sanja Fidler ,

Xue Bin Peng ,

Kayvon Fatahalian

SIGGRAPH , 2023 (Best Paper Honorable Mention)


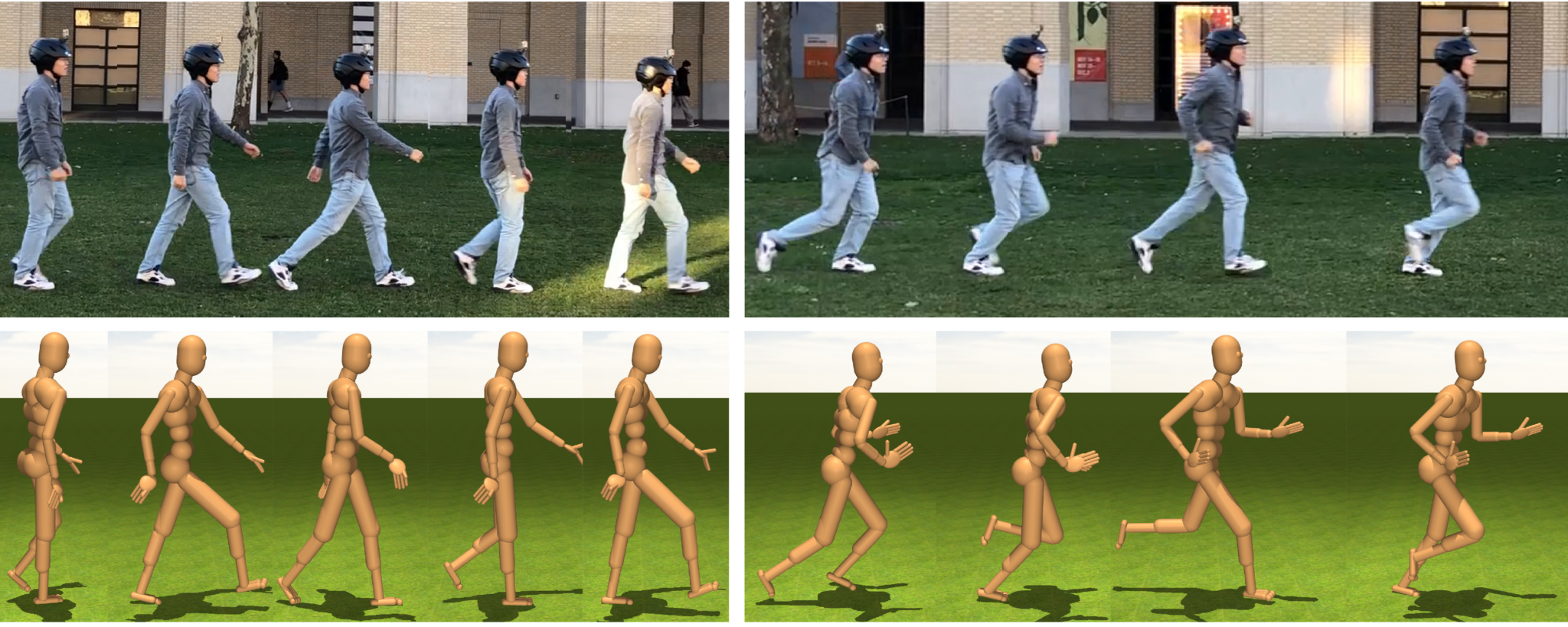

Trace and Pace: Controllable Pedestrian Animation via Guided Trajectory Diffusion

Davis Rempe ,

Zhengyi Luo ,

Xue Bin Peng ,

Ye Yuan ,

Kris Kitani ,

Sanja Fidler ,

Or Litany

CVPR , 2023

RGB-Only Reconstruction of Tabletop Scenes for Collision-Free Manipulator Control

Zhenggang Tang ,

Balakumar Sundaralingam ,

Jonathan Tremblay ,

Bowen Wen ,

Ye Yuan ,

Stephen Tyree ,

Charles Loop ,

Alexander Schwing ,

Stan Birchfield

ICRA , 2023


Embodied Scene-aware Human Pose Estimation

Zhengyi Luo ,

Shun Iwase ,

Ye Yuan ,

Kris Kitani

NeurIPS , 2022

Unified Simulation, Perception, and Generation of Human Behavior

Ye Yuan

Ph.D. Thesis, Robotics Institute, CMU, 2022


GLAMR: Global Occlusion-Aware Human Mesh Recovery with Dynamic Cameras

Ye Yuan ,

Umar Iqbal ,

Pavlo Molchanov ,

Kris Kitani ,

Jan Kautz

CVPR , 2022 (Oral Presentation - Top 4.2%)


Transform2Act: Learning a Transform-and-Control Policy for Efficient Agent Design

Ye Yuan ,

Yuda Song ,

Zhengyi Luo ,

Wen Sun ,

Kris Kitani

ICLR , 2022 (Oral Presentation - Top 1.6%)

Online No-regret Model-Based Meta RL for Personalized Navigation

Yuda Song ,

Ye Yuan ,

Wen Sun ,

Kris Kitani

Learning for Dynamics & Control (L4DC) , 2022

Dynamics-Regulated Kinematic Policy for Egocentric Pose Estimation

Zhengyi Luo ,

Ryo Hachiuma ,

Ye Yuan ,

Kris Kitani

NeurIPS , 2021

AgentFormer: Agent-Aware Transformers for Socio-Temporal Multi-Agent Forecasting

Ye Yuan ,

Xinshuo Weng ,

Yanglan Ou ,

Kris Kitani

ICCV , 2021

SimPoE: Simulated Character Control for 3D Human Pose Estimation

Ye Yuan ,

Shih-En Wei ,

Tomas Simon ,

Kris Kitani ,

Jason Saragih

CVPR , 2021 (Oral Presentation - Top 4.2%)

PTP: Parallelized 3D Tracking and Prediction with Graph Neural Networks and Diversity Sampling

Xinshuo Weng *,

Ye Yuan *,

Kris Kitani (*Equal Contribution)

RA-L and ICRA , 2021 (Best Student Paper Candidate < 2%)


Residual Force Control for Agile Human Behavior Imitation and Extended Motion Synthesis

Ye Yuan ,

Kris Kitani

NeurIPS , 2020

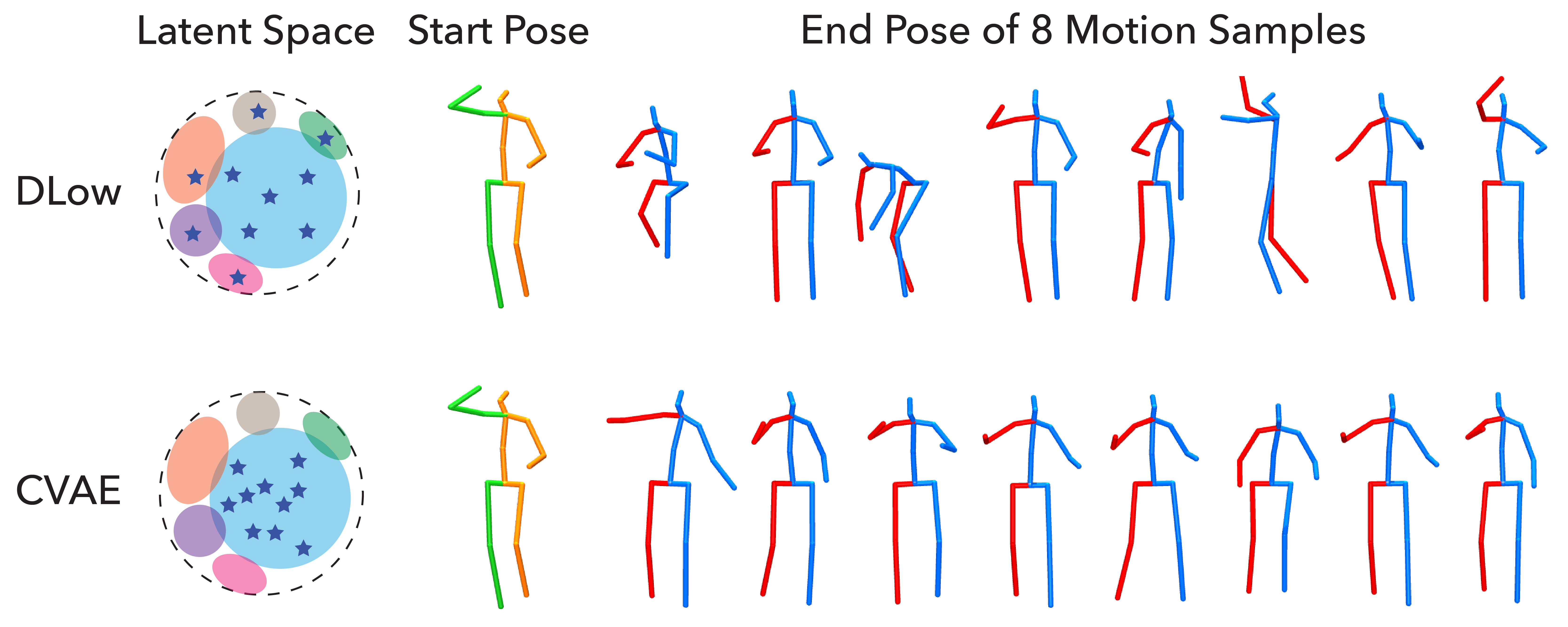

DLow: Diversifying Latent Flows for Diverse Human Motion Prediction

Ye Yuan ,

Kris Kitani

ECCV , 2020

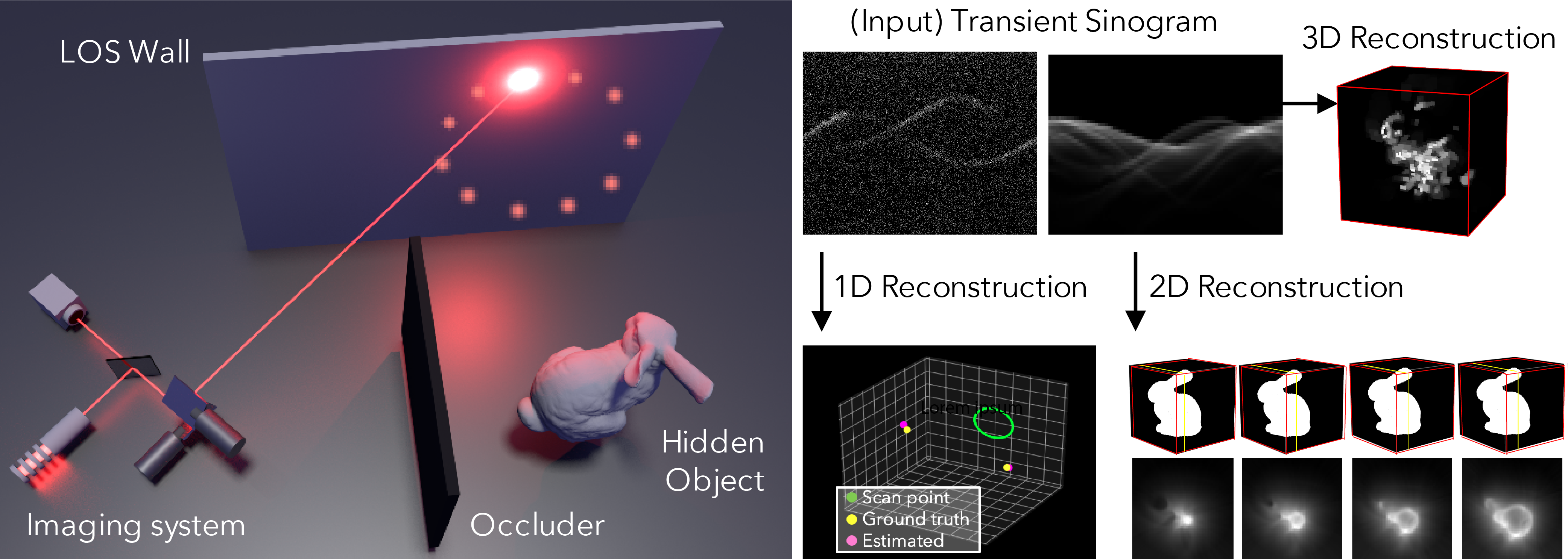

Efficient Non-Line-of-Sight Imaging from Transient Sinograms

Mariko Isogawa ,

Dorian Yao Chan ,

Ye Yuan ,

Kris Kitani ,

Matthew O'Toole

ECCV , 2020


Diverse Trajectory Forecasting with Determinantal Point Processes

Ye Yuan ,

Kris Kitani

ICLR , 2020

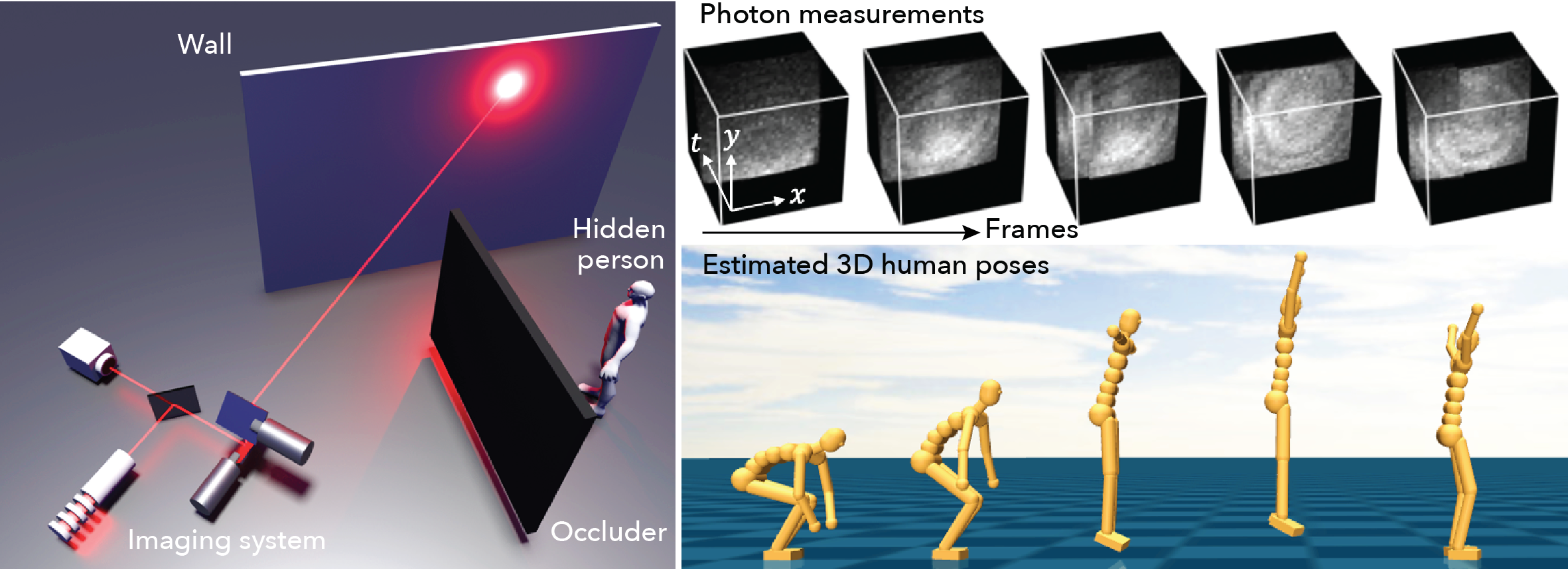

Optical Non-Line-of-Sight Physics-based 3D Human Pose Estimation

Mariko Isogawa ,

Ye Yuan ,

Matthew O'Toole ,

Kris Kitani

CVPR , 2020

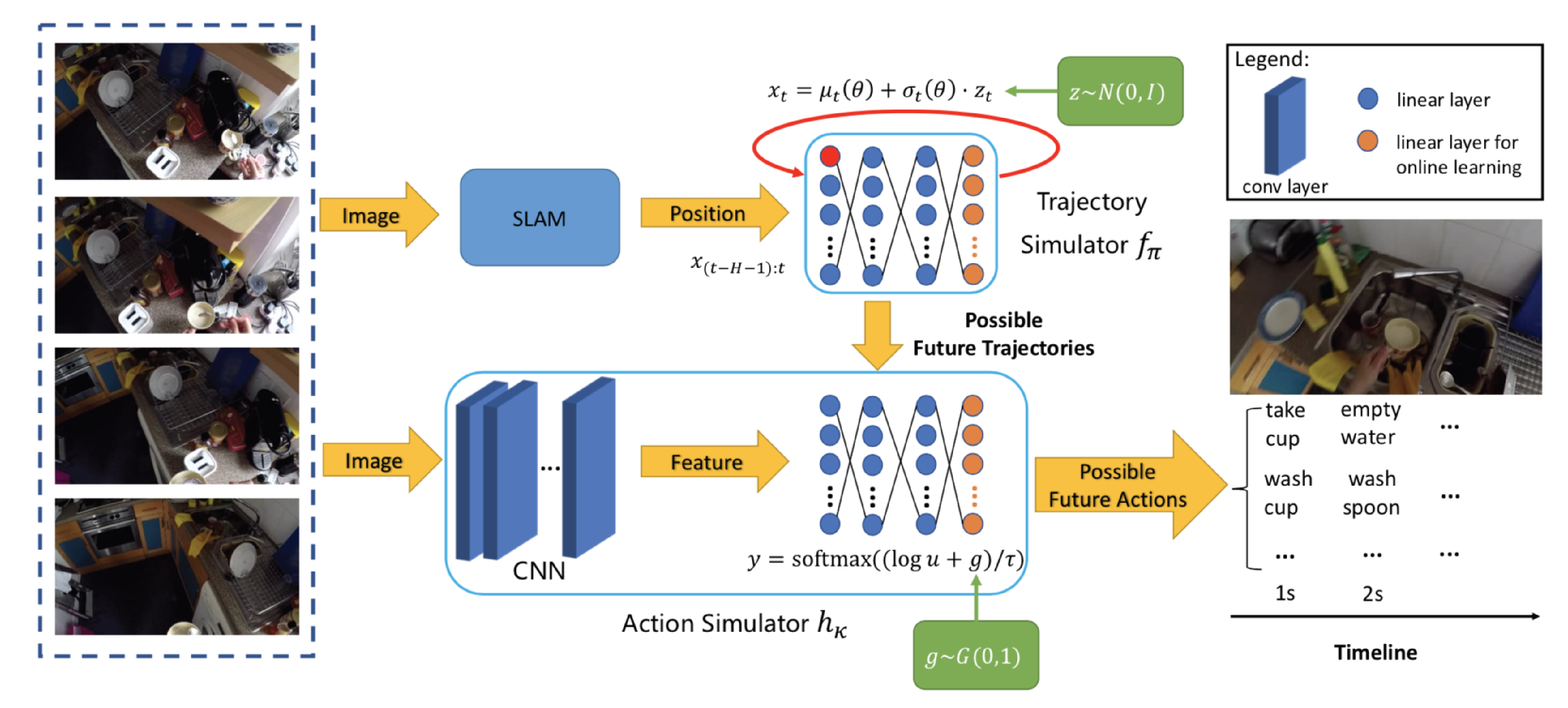

Generative Hybrid Representations for Activity Forecasting with No-Regret Learning

Jiaqi Guan ,

Ye Yuan ,

Kris Kitani ,

Nick Rhinehart

CVPR , 2020 (Oral Presentation - Top 5.7%)

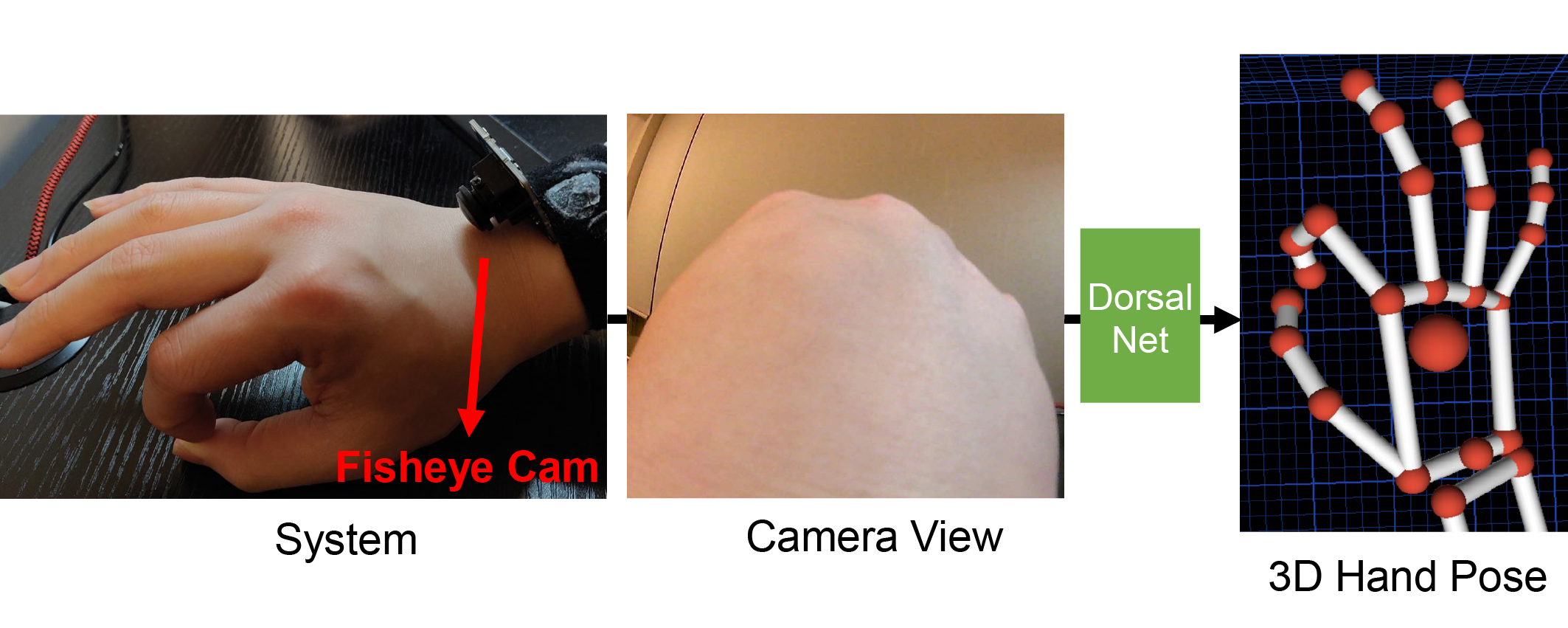

Back-Hand-Pose: 3D Hand Pose Estimation for a Wrist-worn Camera via Dorsum Deformation Network

Erwin Wu ,

Ye Yuan ,

Hui-Shyong Yeo ,

Aaron Quigley ,

Hideki Koike ,

Kris Kitani

ACM Symposium on User Interface Software and Technology (UIST) , 2020

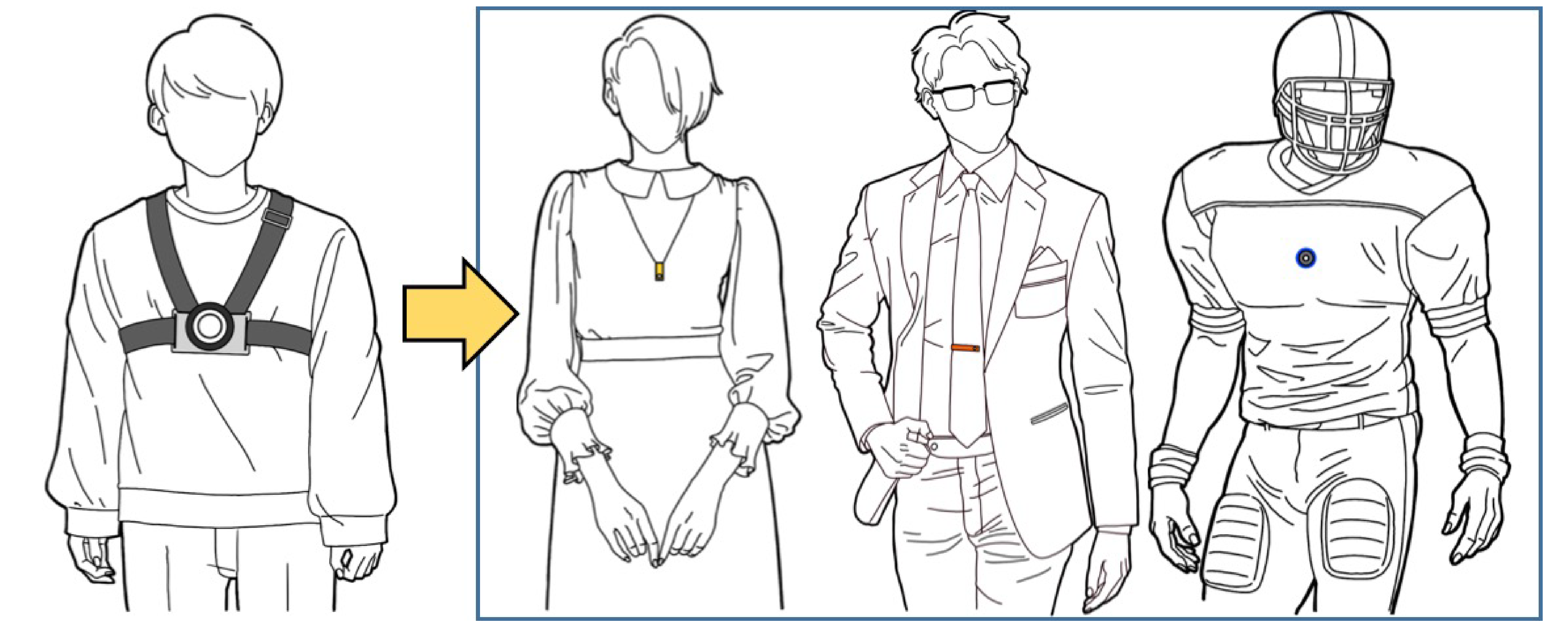

MonoEye: Multimodal Human Motion Capture System Using A Single Ultra-Wide Fisheye Camera

Dong-Hyun Hwang ,

Kohei Aso ,

Ye Yuan ,

Kris Kitani ,

Hideki Koike

ACM Symposium on User Interface Software and Technology (UIST) , 2020


Ego-Pose Estimation and Forecasting as Real-Time PD Control

Ye Yuan ,

Kris Kitani

ICCV , 2019

3D Ego-Pose Estimation via Imitation Learning

Ye Yuan ,

Kris Kitani

ECCV , 2018


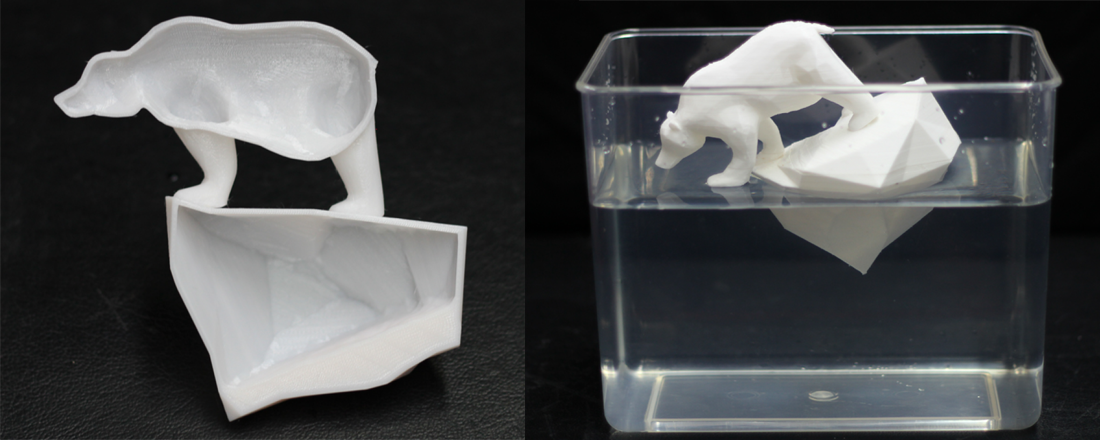

Computational Design of Transformables

Ye Yuan ,

Changxi Zheng ,

Stelian Coros

ACM SIGGRAPH/Eurographics Symposium on Computer Animation (SCA) , 2018

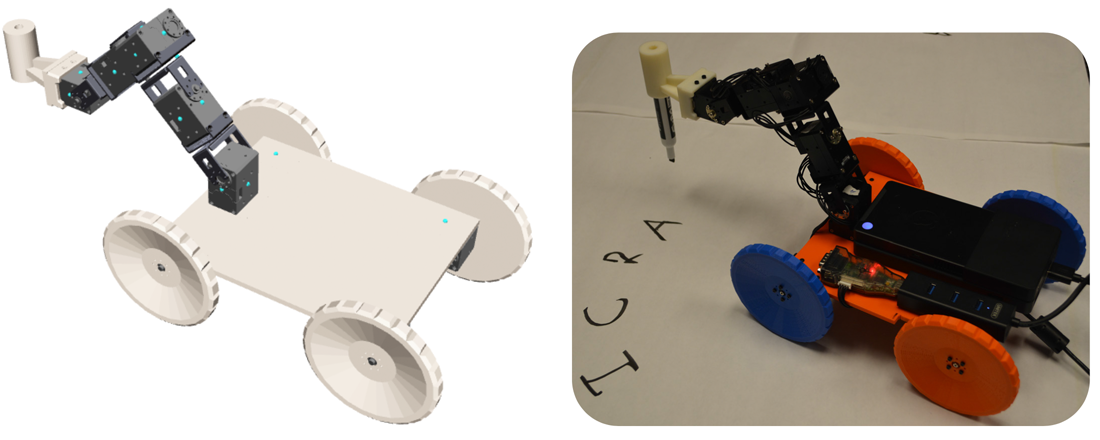

Computational Abstractions for Interactive Design of Robotic Devices

Ruta Desai ,

Ye Yuan ,

Stelian Coros

ICRA , 2017

Continuous Optimization of Interior Carving in 3D Fabrication

Ye Yuan ,

Xiang Chen ,

Changxi Zheng ,

Kun Zhou

Frontiers of Computer Science , 2017

Area Chair

CVPR

Conference Reviewer

NeurIPS, ICML, ICLR, CVPR, ICCV, ECCV, AAAI, ICRA, SIGGRAPH, Eurographics

Journal Reviewer

JMLR, TMLR, TPAMI, TIP, RA-L